Commentary

Ava, The Duchess Of Native Botvertising

- by Ted McConnell, Featured Contributor, January 21, 2016

On Dec. 22, the Federal Trade Commission told us that advertisements that are not identifiable as advertisements are deceptive. They included a list of content that applies, but what’s an ad: any communication with commercial intent? A conversation?

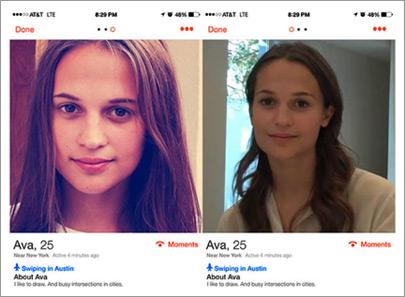

As reported elsewhere, at SXSW last year, a bunch of unsuspecting Tinder users tried to date the lovely Ava. She’s real, and she’s a robot, and she wants you to check out a movie site.

Ava, according to a Tinder user in Austin, is a real person whose picture is used to draw men to a site about a movie in which she is cast. She probably is nice, but her bot-self is manipulative. When you chat with her she seems, well, pretty normal, until she suggests you check out her site, and abruptly terminates the conversation.

Brilliant or horrific, she’s the tip of the iceberg. And, yes, Tinder bots are not exactly news — but bottom line, Ava might be the poster child for a new ad model. What ad model? Let’s parse.

One thing is for sure: Ava’s content. We also know she’s selling something. But she doesn’t tell you that until you are sucked in completely. She fits uniquely with the platform she’s embedded in. So that would make her native advertising! I suppose she is a violation of FTC guidelines, but we kind of like her anyway. Maybe, to men, she’s a game, so she’s like in-game advertising. But wait!

She’s select-in media, with a swipe like a click, with the implied promise of a quick drink and a tête-à-tête in an Austin hotel.

But is she scalable? Sure. Tinder connects like a million matching swipes a day. The obvious play is to train one of these things to be you, saving you the aggravation of dealing with the post-swipe sterile exchange of information with Ava clones. Then the dating game will be about how cool your bot-self is compared to another bot-self. Gulp. The Web will get clogged up with bots trying to seduce other bots.

Already, according to Augustine Fou, Web security guru, Tinder bots are a part of a broader scheme trying to pry bits of information out of swipe-happy date-seekers. The intent is to fill gaps in the databases of bad guys. Place of birth? “I was born in Utah, where were you born?” “I have a dog, what is your dog’s name?” These facts come in handy for hacking security questions. Basically, the bad guys are enriching their profiles.

It’s easy for a harmless girl to pry a few small facts out of a lonely dude. Except for this: She’s not a girl, and she’s not harmless. She’s a silicon construct designed to make you forget you are talking to a computer. The original honey pot, dressed in pixels.

This is a social telltale, and it’s easy to see where it’s pointing. Conversation will become a progressively weaker hallmark of trust. Our children, who are already skeptical of almost anything, will become even more skeptical. The trend of virtual relationships will level off — and sadly, all the discovery and diversity that goes with it will too. The good news is, actual human contact will become more valuable. Trust, the ultimate asset, will be built more carefully, and social proof will matter more. In the meantime, maybe we get a new ad model out of it.

So, thank you Ava, for dragging our sorry selves 12 steps closer to the Internet advertising end game: programmatic, self-aware, conversational, robotic, seduction-based one-to-one native content marketing. This bot’s for you!

Here we have another reason to conduct a background check or similar report before you ever actually meet someone (a stranger at first) for a date.

I wonder if Alicia Vikander knows her photos are being used for that bot.

Mindy ... I hope so, since Ava was the character she played in Ex Machina, and the advertisement she led the viewer to was for Ex Machina, which was launching at the time.